Most “parallel” agent frameworks aren’t. They run tasks concurrently in the same Python process, sharing memory, the filesystem, and environment variables. The moment two tasks touch the same path or the same global, you’re back to single-threaded reality with extra debugging.

We took the opposite bet. Every task an Eluu colleague spawns runs in its own Daytona sandbox — its own machine. Not a thread. Not a coroutine. A fresh, isolated computer with its own filesystem, its own network namespace, its own resource budget. When 50 colleagues are working on 200 tasks at once, those are 200 actual machines, not 200 callbacks fighting over the same event loop.

This post explains why we did it, how it works, what it cost us, and the failure modes we hit on the way.

TL;DR

- Async ≠ parallel. Concurrency on one process gives you the appearance of parallelism while every task fights for the same memory, filesystem, and CPU. Real parallelism requires real isolation.

- Every Eluu task = one Daytona sandbox. Tasks are computers, not coroutines. Each gets its own filesystem, network, packages, and resource budget. We provision through Daytona’s API.

- Colleagues run in parallel; tasks within a colleague run in parallel too. Two tiers of fan-out: colleague_count × tasks_per_colleague.

- The win: zero state corruption between tasks, true failure isolation, no noisy-neighbor problems, and per-task resource budgets that actually mean something.

- The cost: cold-start latency, dollars-per-sandbox, distributed-system debugging, and a whole new class of “how do tasks share state” problems we had to solve from scratch.

What “true parallel” actually means

When most agent frameworks say “parallel”, they mean one of three things:

- asyncio.gather: N coroutines on one event loop. Single Python process, single OS thread, shared everything.

- Thread pools: N threads in one process, contending for the GIL. They help with I/O-bound work but give little or no speedup for CPU-bound Python code.

- Process pools: N OS processes on one machine. Better, but they still share the host’s filesystem, network namespace, and resource pool.

None of these are true parallelism for AI agents. The reason: agent tasks routinely write files, install packages, mutate environment variables, hit rate limits per IP, and corrupt each other’s working state in ways the framework can’t predict. The first time two tasks pip install conflicting versions of the same package into a shared venv, you stop trusting concurrency to be safe.
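Here is about the smallest version of that failure we could write down: a TypeScript sketch (illustrative names, not Eluu code) where two “concurrent” tasks share one event loop and one module-level object. The object stands in for any shared global: an environment variable, a cache, a working directory.

```typescript
// Hypothetical stand-in for any shared global: process.env, a module cache, a cwd.
const sharedConfig: { workdir?: string } = {};

async function fakeTask(name: string, ioDelayMs: number): Promise<string> {
  // The task "configures" itself by writing shared state.
  sharedConfig.workdir = `/work/${name}`;
  // Simulated I/O: the task yields, and other tasks on the loop get to run.
  await new Promise<void>((resolve) => setTimeout(resolve, ioDelayMs));
  // Read back whatever the shared state holds *now*.
  return sharedConfig.workdir ?? '';
}

// Run both tasks "in parallel" on a single event loop.
async function demo(): Promise<string[]> {
  return Promise.all([fakeTask('a', 20), fakeTask('b', 10)]);
}
```

Both promises resolve to “/work/b”: task b’s write lands while task a is suspended on I/O, so a reads back b’s state. Nothing crashed; the state was just silently wrong.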

True parallelism means two tasks cannot, by construction, see each other’s state.

That’s the standard we hold ourselves to. And the only way to hit it is full machine-level isolation per task.

Why Daytona, specifically

We evaluated four options before landing on Daytona:

- Docker containers — fast to provision, but our tasks regularly need to install language toolchains, mount volumes, and call out to system tools. Container-on-host gets us network isolation but not enough OS-level isolation for our threat model.

- Firecracker microVMs — strictly better isolation, but we’d be running and maintaining the orchestration ourselves.

- Kubernetes Jobs — reasonable for batch, miserable for interactive agent tasks where the whole sandbox needs to be addressable for the colleague to inspect intermediate state.

- Daytona — API-driven sandbox provisioning, fast cold starts (sub-second on warm pools), per-sandbox resource budgets, and a clean lifecycle: create → run → snapshot → destroy.

Daytona wasn’t the cheapest. It was the one we didn’t have to operate.

How a parallel task actually runs

A simplified view of what happens when a colleague spawns a task:

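```typescript
// Simplified view. The declarations below are assumed shapes for illustration,
// not the exact signatures of Daytona's SDK or of Eluu's internal helpers.
type ColleagueId = string;
type ToolRef = string;

interface Sandbox {
  exec(opts: { cmd: string; args: string[]; env: Record<string, string> }): Promise<unknown>;
  destroy(): Promise<void>;
}

declare const daytona: {
  create(opts: { image: string; cpu: number; memoryMb: number; timeoutMs: number }): Promise<Sandbox>;
};
declare function pickImage(tools: ToolRef[]): string;
declare function scopedEnvFor(task: Task): Record<string, string>;

type Task = {
  id: string;
  colleague: ColleagueId;
  intent: string;          // what the task is trying to do
  toolsNeeded: ToolRef[];  // determines sandbox image
  budgetMs: number;
  budgetMemoryMb: number;
};

async function runTask(task: Task): Promise<unknown> {
  // 1. Provision an isolated machine. Not a thread. A computer.
  const sandbox = await daytona.create({
    image: pickImage(task.toolsNeeded),
    cpu: 2,
    memoryMb: task.budgetMemoryMb,
    timeoutMs: task.budgetMs,
  });

  try {
    // 2. The task's entire execution lives inside this sandbox.
    const result = await sandbox.exec({
      cmd: 'eluu-agent',
      args: ['--task', task.id],
      env: scopedEnvFor(task),
    });
    return result;
  } finally {
    // 3. Tear down. Nothing leaks back to the host or to sibling tasks.
    await sandbox.destroy();
  }
}
```

Two things matter here. First, the sandbox is the unit of work, not the task data or the prompt. Second, nothing the task does survives the finally block unless the colleague explicitly persists it back through Eluu’s memory layer (which we wrote about in agent memory architecture).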

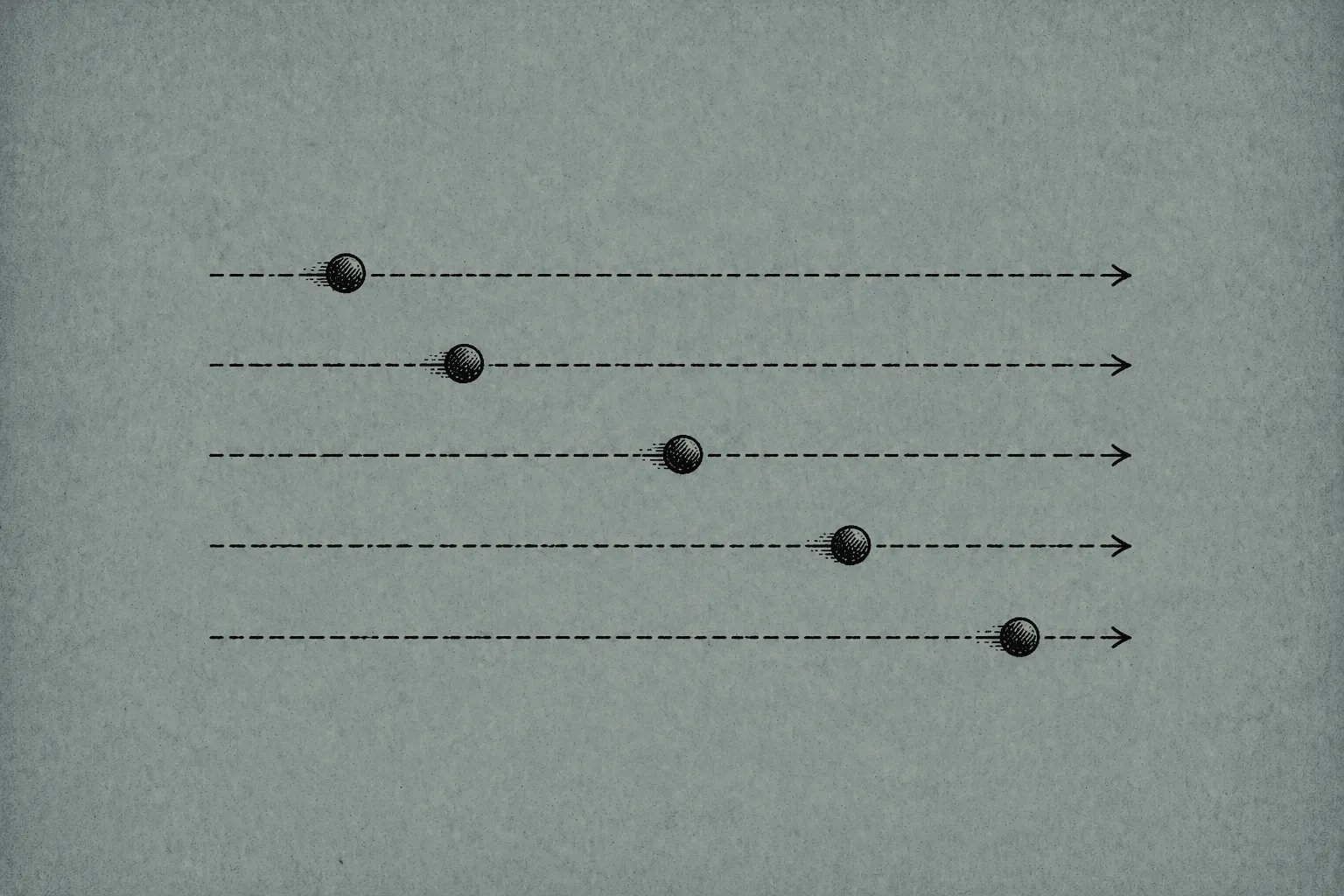

Two tiers of parallelism

Most frameworks give you one tier: “N agents running at once.” We expose two:

- Colleague-level parallelism — Lisa, Mark, Casie, and Ruby can all run at the same time, on different sandboxes, with no awareness of each other’s work except through shared memory.

- Task-level parallelism inside a colleague — When Lisa needs to qualify 30 inbound leads, she spawns 30 sandboxes in parallel and merges results. The colleague is the orchestrator; the tasks are the workers.

So colleague_count × tasks_per_colleague is the real concurrency surface. With 50 colleagues each capable of 8 parallel tasks, that’s 400 simultaneous machines. Not 400 coroutines. Four hundred actual sandboxes.
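Fan-out inside a colleague still needs a ceiling, since each colleague has a concurrency budget (default 8, per the FAQ). A minimal sketch of that pattern, assuming hypothetical names (mapWithLimit, Outcome) and a worker that would, in practice, wrap something like runTask: at most limit workers run at once, results come back in input order, and a thrown error becomes a failed outcome for that item instead of rejecting the whole batch.

```typescript
// Hypothetical outcome type: a failed task is data, not an exception.
type Outcome<R> = { ok: true; value: R } | { ok: false; error: unknown };

// Run `worker` over `items` with at most `limit` calls in flight,
// returning one Outcome per item, in input order.
async function mapWithLimit<T, R>(
  items: readonly T[],
  limit: number,
  worker: (item: T, index: number) => Promise<R>,
): Promise<Outcome<R>[]> {
  if (!Number.isInteger(limit) || limit < 1) {
    throw new RangeError('limit must be a positive integer');
  }
  const results: Outcome<R>[] = new Array(items.length);
  let next = 0;

  // Each lane claims the next unclaimed index until the list is exhausted.
  // No lock needed: the claim runs synchronously between awaits.
  async function lane(): Promise<void> {
    while (next < items.length) {
      const i = next++;
      try {
        results[i] = { ok: true, value: await worker(items[i], i) };
      } catch (error) {
        // One crashed task is recorded; sibling lanes keep going.
        results[i] = { ok: false, error };
      }
    }
  }

  const lanes = Array.from({ length: Math.min(limit, items.length) }, () => lane());
  await Promise.all(lanes);
  return results;
}
```

For Lisa’s 30 leads with a limit of 8, this keeps 8 sandboxes busy and starts the next one as each finishes; a lead whose task crashes shows up as ok: false in its own slot while the rest come back normally.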

The advantages we got

In rough order of how much they mattered:

- Zero state corruption between tasks. Two tasks cannot collide on a file path, a package version, a memory address, or an environment variable. By construction.

- Failure isolation. One task crashing does not affect siblings. A segfault, an OOM, an infinite loop — all contained inside the sandbox. The colleague sees a failure code and continues.

- Per-task resource budgets that actually bite. When we say “this task gets 2 CPUs and 4 GB”, the sandbox enforces it. There’s no “well, technically it’s sharing memory with three other tasks.” It isn’t.

- No noisy-neighbor problems. A task that pegs CPU for 60 seconds doesn’t slow down the rest of the workspace.

- Reproducibility for debugging. Replaying a production failure means provisioning the same image with the same inputs. The sandbox itself is the deterministic substrate.

- Security boundary alignment. A task that gets prompt-injected into running a hostile command can damage at most one sandbox. The blast radius is one ephemeral machine.

The disadvantages we paid for

In equally rough order:

- Cold-start latency. A fresh sandbox isn’t free. We pay 200–800ms per provision even with warm pools, which adds up when a colleague is fanning out 30 tasks. We keep a small pre-warmed pool per common image to amortize.

- Cost. Sandboxes cost real money. Our finance dashboard has a tile labeled “active sandboxes” and another labeled “sandbox-hours per workspace per day.” Without per-task budgets and aggressive teardown, this would be ruinous.

- Distributed-system problems show up earlier. State sharing now requires explicit IPC — through our memory layer, our event log, or scoped object storage. We trade local mutability for distributed coordination.

- Debugging across many sandboxes is harder. A bug that would once have involved three tasks sharing memory now involves three sandboxes talking via the event log, and you have to reason across all three logs.

- Image bloat. Every new tool a colleague might need has to be in the image, or we pay an install cost per task. We maintain a small matrix of base images and pick at provision time based on toolsNeeded.

- Networking is a discipline. Tasks that need to call external APIs need their own outbound rules, their own retry budgets, and their own rate-limit accounting. The host’s IP is not the task’s IP.
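One plausible shape for that provision-time choice, sketched under assumptions (the BaseImage catalog shape and this two-argument pickImage signature are hypothetical, not necessarily how ours works): prefer an image that already contains every tool in toolsNeeded, break ties by size, and otherwise take the image missing the fewest tools and install the remainder per task.

```typescript
type ToolRef = string;

// Hypothetical catalog entry; a real one would also carry a pinned version.
interface BaseImage {
  name: string;
  tools: ReadonlySet<ToolRef>;
  sizeMb: number;
}

interface ImageChoice {
  image: BaseImage;
  missing: ToolRef[]; // tools the task must install itself (the per-task cost)
}

// Prefer the image missing the fewest required tools; break ties by size.
// An image covering everything has zero missing tools, so it always wins.
function pickImage(toolsNeeded: readonly ToolRef[], catalog: readonly BaseImage[]): ImageChoice {
  let best: ImageChoice | undefined;
  for (const image of catalog) {
    const missing = toolsNeeded.filter((tool) => !image.tools.has(tool));
    const better =
      best === undefined ||
      missing.length < best.missing.length ||
      (missing.length === best.missing.length && image.sizeMb < best.image.sizeMb);
    if (better) best = { image, missing };
  }
  if (best === undefined) throw new Error('image catalog is empty');
  return best;
}
```

Returning missing alongside the image makes the per-task install cost explicit, which is the number to watch when deciding whether a tool earns a place in a base image.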

The cost of per-task isolation is paid in latency, cost, and debugging complexity. The cost of not having it is paid in incidents.

Failure modes we’ve actually hit

Three classes of failure that surprised us:

- Sandbox leak. Early on, a code path that errored before reaching the finally block left sandboxes running. We caught it when the active-sandboxes tile spiked at 3am. Fixed by moving teardown into a separate reaper process that watches for orphans.

- Image drift. A colleague’s task started failing because the base image had been silently updated and a Python package version moved. Now image versions are pinned per colleague and can be rolled back.

- Cross-task assumption. A colleague was implicitly relying on two tasks running on the same machine, caching tokenizer state in /tmp and expecting the second task to find it. Worked locally; broken in production. We added a lint rule against /tmp reliance.
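The reaper can stay simple because it doesn’t depend on the task’s own code path. A minimal sketch, assuming a hypothetical SandboxApi client that can list sandboxes with a task label and destroy them by ID (not Daytona’s actual SDK surface): anything labeled with a task that is no longer live, and older than a grace window, gets destroyed.

```typescript
// Hypothetical view of a running sandbox, e.g. built from labels set at create time.
interface SandboxInfo {
  id: string;
  taskId?: string; // absent for sandboxes owned by something else, e.g. a warm pool
  createdAtMs: number;
}

// Hypothetical thin client; not Daytona's actual SDK surface.
interface SandboxApi {
  list(): Promise<SandboxInfo[]>;
  destroy(id: string): Promise<void>;
}

// An orphan belongs to a task that is no longer live and is older than the
// grace window (which protects tasks that are still registering).
function findOrphans(
  sandboxes: readonly SandboxInfo[],
  liveTaskIds: ReadonlySet<string>,
  nowMs: number,
  graceMs: number,
): SandboxInfo[] {
  return sandboxes.filter(
    (s) => s.taskId !== undefined && !liveTaskIds.has(s.taskId) && nowMs - s.createdAtMs > graceMs,
  );
}

// One reaper pass: list, decide, destroy. Run it on a timer, outside the task
// path, so it still works when the task's own cleanup never ran.
async function reapOnce(
  api: SandboxApi,
  liveTaskIds: ReadonlySet<string>,
  graceMs: number,
  nowMs: number = Date.now(),
): Promise<string[]> {
  const orphans = findOrphans(await api.list(), liveTaskIds, nowMs, graceMs);
  await Promise.all(orphans.map((s) => api.destroy(s.id)));
  return orphans.map((s) => s.id);
}
```

The grace window matters: without it, a reaper pass that lands between sandbox creation and task registration would kill healthy work. Unlabeled sandboxes, such as ones sitting in a warm pool, are deliberately left alone.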

What we’d do differently if starting over

- Build the warm-pool layer on day one. Provisioning latency is the single biggest tax on parallel agents and the easiest to amortize. We treated it as an optimization for too long.

- Standardize on one image format from the start. We have three image lineages because we evolved them under pressure. Consolidating now is annoying.

- Make per-task observability a first-class output. Each sandbox should emit its own structured trace, joinable by task ID. We bolted this on; it should have been native from sandbox-create-time.
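A warm-pool layer doesn’t have to be elaborate to start paying off. A minimal sketch, assuming a hypothetical provision function that wraps the actual create call (names and sizing are illustrative, not our production pool): keep targetPerImage idle sandboxes per image, hand one out on acquire, refill in the background, and fall back to a cold provision when the pool is empty.

```typescript
// Hypothetical provisioner; in practice this would wrap Daytona's API.
type Provision<S> = (image: string) => Promise<S>;

// A per-image pool of pre-provisioned sandboxes.
class WarmPool<S> {
  private readonly idle = new Map<string, S[]>();
  private readonly pending = new Map<string, number>();
  private readonly provision: Provision<S>;
  private readonly targetPerImage: number;

  constructor(provision: Provision<S>, targetPerImage: number) {
    this.provision = provision;
    this.targetPerImage = targetPerImage;
  }

  private idleFor(image: string): S[] {
    let list = this.idle.get(image);
    if (list === undefined) {
      list = [];
      this.idle.set(image, list);
    }
    return list;
  }

  // Top the pool up to targetPerImage, counting provisions already in flight
  // so that overlapping refills don't overshoot.
  async refill(image: string): Promise<void> {
    const idle = this.idleFor(image);
    const inFlight = this.pending.get(image) ?? 0;
    const missing = Math.max(0, this.targetPerImage - idle.length - inFlight);
    this.pending.set(image, inFlight + missing);
    const jobs = Array.from({ length: missing }, async () => {
      try {
        idle.push(await this.provision(image));
      } finally {
        this.pending.set(image, (this.pending.get(image) ?? 1) - 1);
      }
    });
    await Promise.all(jobs);
  }

  // Hand out a warm sandbox if one is idle (and replace it in the background);
  // otherwise fall back to a cold provision and pay the latency.
  async acquire(image: string): Promise<S> {
    const warm = this.idleFor(image).pop();
    if (warm === undefined) return this.provision(image);
    this.refill(image).catch(() => {
      // A real pool would log and retry; a failed refill only means a colder next acquire.
    });
    return warm;
  }
}
```

Counting in-flight provisions in refill is the detail that’s easy to miss: without it, two quick acquires each trigger a full refill and the pool overshoots its target, which would show up as idle sandbox-hours.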

FAQ

Are Eluu agents truly parallel, or just async? Truly parallel. Each task runs in its own Daytona sandbox — a separate machine with its own filesystem, network namespace, and resource budget. Tasks cannot, by construction, see each other’s state.

What is Daytona, and why do you use it? Daytona is an API-driven sandbox provisioning platform. It gives us isolated, ephemeral machines per task with sub-second cold starts on warm pools, and lets us avoid operating the orchestration layer ourselves. We chose it after evaluating Docker, Firecracker microVMs, and Kubernetes Jobs.

How is one-sandbox-per-task different from running threads or coroutines? Threads and coroutines share memory, filesystem, and process state. Sandbox-per-task gives each task its own everything. The blast radius of a failed task is one machine, not the entire colleague.

What happens when a task crashes? The sandbox is destroyed. The colleague sees a failure code and decides whether to retry, fall back, or surface the failure. No sibling task is affected. No host-side state is corrupted.

How many parallel tasks can a colleague run? Limited by the workspace’s per-colleague concurrency budget (default 8) and the workspace’s total sandbox budget. The hard limit is set by Daytona’s account quota, not by Eluu’s framework.

Doesn’t running a separate machine per task cost a lot? It costs more than running everything on one host. We pay it because the alternative — debugging mysterious cross-task corruption in production — costs more in engineering hours and customer trust.

If your team is shipping multi-agent systems and any of this resonates, we’d genuinely love to compare notes — reach out at eluu.ai.